Diving into the Ocean of Big Data

In the vast world of data, where mountains of information loom like giants, imagine being able to work with data effortlessly. This blog post describes our journey toward a solution that simplifies managing and processing very large datasets. We will also explore SyncLite, the data consolidation platform that enabled us to achieve our goals. Let’s discover the power of simplicity in the face of data complexity.

ChiStats has developed forecastr.ai, a platform designed to help fund and investment managers improve their performance and achieve better returns while managing risks.

Forecastr.ai relies on a leading market player and data provider for daily market data. Each stock has a collection of option price levels known as strikes. Our task is to pick the most relevant strikes, subscribe to them, and in turn access real-time market data. Each strike corresponds to a unique number known as an instrument token.

The formula for calculating the number of subscribed tokens is quite straightforward:

n * m * e

Where:

'n' is the number of subscribed stocks or indices,

'm' is the number of strikes selected per stock or index, and

'e' is the number of expiries.

With 50 different stocks, around 30 instrument tokens each, and 3 expiries (50 * 30 * 3 = 4500), we cover a broad spectrum of more than 4000 tokens per day. The data engineering pipeline plays a crucial role in maintaining the data for all of these stocks.
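As a quick check of the formula, the token count can be computed directly. This is a minimal Python sketch; the function name `subscribed_tokens` is hypothetical, and the inputs are simply the figures quoted above.

```python
def subscribed_tokens(n_instruments: int, strikes_per_instrument: int, expiries: int) -> int:
    """Number of instrument tokens to subscribe to: n * m * e."""
    return n_instruments * strikes_per_instrument * expiries


# Figures from the text: 50 stocks, about 30 strikes each, 3 expiries.
total = subscribed_tokens(50, 30, 3)
print(total)  # 4500
```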

Now, let's look at the sheer volume of data we are dealing with. Each token yields around 1-3 data points every second, and each data point is a JSON message describing the current market state of that token. At our target rate, we therefore receive a minimum of 4000 data points every second. Over the roughly 6 hours of active trading, this accumulates to tens of millions of records by the end of each day, occupying almost 20-30 GB. We need to capture this live data with low latency.
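The daily record count can be estimated with back-of-the-envelope arithmetic. The sketch below assumes exactly 4000 tokens and a 6-hour session, as stated above; the constant and function names are illustrative only, and real volumes will vary with market activity.

```python
TOKENS = 4000                    # subscribed tokens (target from the text)
SESSION_SECONDS = 6 * 60 * 60    # about 6 hours of active trading


def daily_records(points_per_token_per_second: int) -> int:
    """Estimated records per day at a constant per-token tick rate."""
    return TOKENS * points_per_token_per_second * SESSION_SECONDS


low = daily_records(1)   # every token ticks once per second
high = daily_records(3)  # every token ticks three times per second
print(low, high)  # 86400000 259200000
```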

Interestingly, this vast number of records doesn't belong to a single category. We're actively monitoring nearly 50 different stocks and indices, so we maintain a separate database file for each of them. The files share a similar schema, with multiple tables dedicated to call and put options data. Handling and sorting through this much data demands dedicated time and effort. That's where SyncLite steps in, becoming an invaluable part of our journey.
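One way to picture the one-file-per-instrument layout is the sketch below. It uses Python's standard `sqlite3` module directly rather than the SyncLite driver, and the table and column names (`call_ticks`, `put_ticks`, `last_price`, and so on) are assumptions made for illustration, not our production schema.

```python
import sqlite3
import tempfile
from pathlib import Path

# Hypothetical schema: one table for call options, one for put options.
SCHEMA = """
CREATE TABLE IF NOT EXISTS call_ticks (
    instrument_token INTEGER NOT NULL,
    ts               TEXT    NOT NULL,
    last_price       REAL    NOT NULL
);
CREATE TABLE IF NOT EXISTS put_ticks (
    instrument_token INTEGER NOT NULL,
    ts               TEXT    NOT NULL,
    last_price       REAL    NOT NULL
);
"""


def open_symbol_db(data_dir: Path, symbol: str) -> sqlite3.Connection:
    """Open (and create if needed) the database file for one stock or index."""
    data_dir.mkdir(parents=True, exist_ok=True)
    conn = sqlite3.connect(str(data_dir / f"{symbol}.db"))
    conn.executescript(SCHEMA)
    return conn


# Demo in a temporary directory so nothing is left behind on disk.
with tempfile.TemporaryDirectory() as tmp:
    conn = open_symbol_db(Path(tmp), "NIFTY")
    tables = sorted(
        row[0]
        for row in conn.execute("SELECT name FROM sqlite_master WHERE type = 'table'")
    )
    conn.close()

print(tables)  # ['call_ticks', 'put_ticks']
```

Because every file shares the same schema, the same queries and consolidation rules can be applied to each stock or index without special cases.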

What is a data consolidation platform?

It is a software solution designed to combine and manage data from multiple sources in a single repository. Organizations use these platforms to streamline the process of collecting, organizing, and making sense of data that may be distributed across various systems, databases, applications, and formats. They enable organizations to centralize, clean, and manage their data effectively, leading to improved decision-making and cost savings.

About SyncLite:

SyncLite is a real-time data consolidation platform; it integrates with applications across various devices, granting an SQL interface for transactional operations. It is designed with the goal of eliminating complex coding.

With SyncLite, real-time data replication and consolidation from diverse data-intensive applications across desktops, smartphones, and edge devices have become a breeze. Effortlessly channel data into one or more databases, data warehouses or data lakes of your choice, unlocking the potential for real-time analytics and AI-driven insights.

SyncLite allows users to access and work with data in real time, even while it is still being collected or updated. For our use case this is crucial, because we need access to the data as it is produced.

Comprehensive Overview of Data Engineering Pipeline:

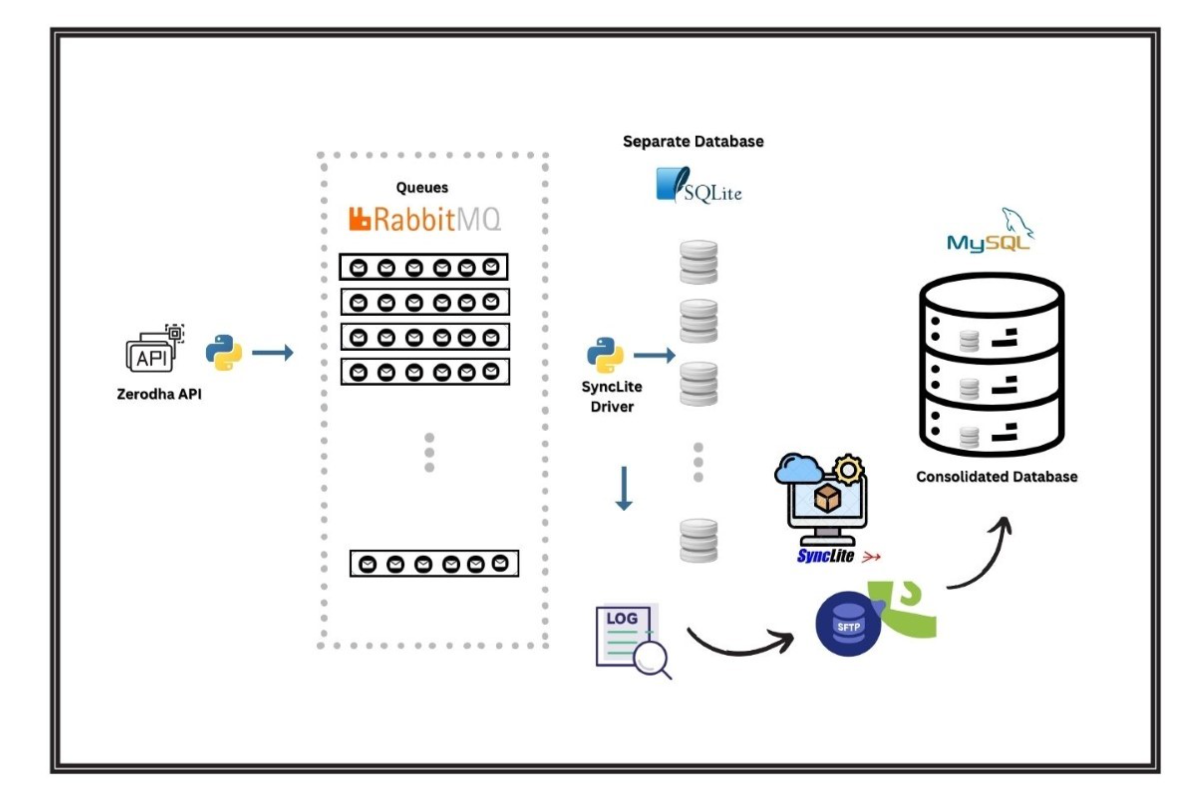

The data acquisition component, developed in Python, runs on an Azure Virtual Machine and continuously retrieves data from Zerodha. This data then flows through RabbitMQ queues, undergoes preprocessing, and is distributed to distinct SyncLite Appender devices. Each of these devices corresponds to a separate SQLite database file. The SyncLite driver publishes the logs it generates in real time to an SFTP stage server, which runs on a second Azure VM. Simultaneously, the SyncLite consolidator, operating on that same second VM, continuously consolidates data from these devices into three separate databases, all hosted on Azure MySQL.
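The preprocessing and distribution step can be sketched roughly as follows. This is not our production code: a plain Python list stands in for the RabbitMQ queue, in-memory batches stand in for the SyncLite Appender devices, and the token-to-symbol map and the JSON field names (`instrument_token`, `last_price`) are assumptions for illustration.

```python
import json
from collections import defaultdict
from typing import Dict, Iterable, List, Optional

# Hypothetical lookup from instrument token to the stock or index it belongs to.
TOKEN_TO_SYMBOL = {1001: "NIFTY", 1002: "NIFTY", 2001: "RELIANCE"}


def preprocess(raw: str) -> Optional[dict]:
    """Parse one JSON tick; return None if it cannot be routed."""
    tick = json.loads(raw)
    symbol = TOKEN_TO_SYMBOL.get(tick.get("instrument_token"))
    if symbol is None or "last_price" not in tick:
        return None
    return {
        "symbol": symbol,
        "instrument_token": tick["instrument_token"],
        "last_price": float(tick["last_price"]),
    }


def route(messages: Iterable[str]) -> Dict[str, List[dict]]:
    """Group valid ticks by symbol, one batch per per-symbol database."""
    batches: Dict[str, List[dict]] = defaultdict(list)
    for raw in messages:
        row = preprocess(raw)
        if row is not None:
            batches[row["symbol"]].append(row)
    return dict(batches)


messages = [
    '{"instrument_token": 1001, "last_price": 101.5}',
    '{"instrument_token": 2001, "last_price": 2450.0}',
    '{"instrument_token": 1002, "last_price": 99.25}',
    '{"instrument_token": 9999, "last_price": 1.0}',  # unknown token, dropped
]
routed = route(messages)
print({symbol: len(rows) for symbol, rows in routed.items()})
# {'NIFTY': 2, 'RELIANCE': 1}
```

In the real pipeline, each batch would be written through the SyncLite device for that symbol instead of being kept in memory.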

Real-time analytics are facilitated by an API that consumes data from these native SQLite database files, providing immediate insights into the trading data. To preserve the data, the SQLite database files created each day are periodically archived in Azure Blob Storage, keeping them available for future use.

This entire pipeline, including the data acquisition app, the RabbitMQ queues, and the SyncLite consolidator, operates under the supervision of a monitoring and alerting system powered by Prometheus and Grafana. While the described architecture suits our needs, we acknowledge that other technologies, such as Apache Spark, Hadoop, and Apache Kafka, are available and could be explored based on specific requirements and constraints.

The pipeline starts daily at 9:15 AM and runs continuously until the market closes at 3:30 PM. What sets this system apart is that it is fully automated. It has been operating reliably for several months. The best part is that it is designed to scale easily in terms of the volume, velocity, and variety of data.
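The daily run window can be expressed as a simple check that a scheduler or the acquisition loop might call. This is a minimal sketch under stated assumptions: the function name `is_market_open` is hypothetical, timestamps are assumed to already be in the exchange's local time, and weekends and market holidays are deliberately ignored.

```python
from datetime import datetime, time

MARKET_OPEN = time(9, 15)    # pipeline start, from the text
MARKET_CLOSE = time(15, 30)  # market close, from the text


def is_market_open(now: datetime) -> bool:
    """True if `now` falls inside the daily 9:15 AM to 3:30 PM window."""
    return MARKET_OPEN <= now.time() <= MARKET_CLOSE


print(is_market_open(datetime(2024, 1, 2, 9, 15)))   # True
print(is_market_open(datetime(2024, 1, 2, 15, 31)))  # False
```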

This stored data serves as a valuable resource. Data scientists can read and use the data as it's collected to gain instant insights into ongoing processes. One of its primary uses is analyzing market dynamics. By analyzing this data, businesses and analysts can better understand market trends, consumer behaviour, and various economic indicators, which allows us to draw meaningful insights and make informed decisions. Such insights range from identifying emerging opportunities and potential risks to shaping marketing strategies and product development. Moreover, the stored data opens the door to recognizing patterns and correlations through advanced data analytics and machine learning techniques.

In conclusion, this system unlocks the world of real-time data, letting us explore market insights and opening up a rich source of opportunities. It has transformed our approach to handling big data, making it manageable and highly informative.